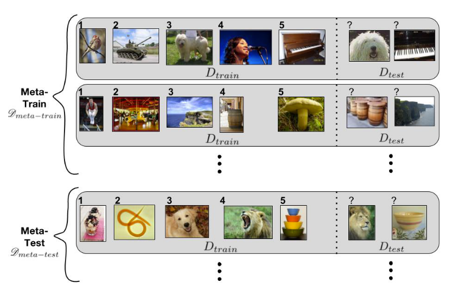

Few-shot learning algorithms can be divided into three sub-categories:

- Metric-based approaches use a metric model to measure the similarity between support and query embeddings. Examples of these algorithms are Matching Networks, Prototypical Networks, and Relation Networks.

- Optimization-based approaches fine-tune a base learner for task T using its few support samples, so that the base learner converges quickly on these samples within a few parameter updates. Examples of these algorithms are MAML and Reptile.

- Model-based approaches parametrize the base learner, or some of its subparts, for a novel task so that it can address this task specifically. Here the base learner and the meta-learner are trained synchronously within each task, and the meta-learner is essentially a task-specific parameter generator. Examples of these algorithms are Memory-Augmented Neural Networks (MANNs) and MetaNet.

1. Prototypical Networks

This method takes the mean of the support samples’ embeddings as the prototype of the class that contains these samples, then assigns a query sample to the class whose prototype is nearest in Euclidean distance.
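The prototype computation and nearest-prototype rule can be sketched in a few lines. This is a minimal illustration that assumes the embeddings have already been produced by some embedding network; the function names `class_prototypes` and `predict` and the toy 2-D vectors are hypothetical, and plain Python lists are used only to keep the example self-contained.

```python
import math
from collections import defaultdict

def class_prototypes(support_embeddings, support_labels):
    """Average the support embeddings of each class into one prototype."""
    grouped = defaultdict(list)
    for emb, label in zip(support_embeddings, support_labels):
        grouped[label].append(emb)
    return {
        label: [sum(dim) / len(embs) for dim in zip(*embs)]
        for label, embs in grouped.items()
    }

def predict(query_embedding, prototypes):
    """Return the label whose prototype is nearest in Euclidean distance."""
    return min(
        prototypes,
        key=lambda label: math.dist(query_embedding, prototypes[label]),
    )

# Toy 2-way 2-shot episode with hand-written 2-D embeddings (illustrative only).
support = [[0.0, 0.0], [0.0, 2.0], [4.0, 4.0], [6.0, 4.0]]
labels = ["cat", "cat", "dog", "dog"]
prototypes = class_prototypes(support, labels)
prediction = predict([1.0, 1.5], prototypes)  # nearest prototype is "cat"
```

In a full model, training would typically apply a softmax over negative distances so that the embedding network can be learned end to end; the sketch covers only the inference step described above.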

2. Matching Networks

This method predicts the class of a query sample by measuring the cosine similarity between its embedding and the embedding of each support sample, then assigning it to the class with the highest similarity.
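A rough sketch of this idea follows. It assumes precomputed embeddings and turns the cosine similarities into attention weights with a softmax, then sums the weights per class, which is one common way to formulate the comparison; the helper names and toy vectors are hypothetical.

```python
import math
from collections import defaultdict

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def class_scores(query, support_embeddings, support_labels):
    """Softmax over cosine similarities, summed per class (attention-weighted vote)."""
    exps = [math.exp(cosine_similarity(query, s)) for s in support_embeddings]
    total = sum(exps)
    scores = defaultdict(float)
    for weight, label in zip(exps, support_labels):
        scores[label] += weight / total
    return dict(scores)

# Toy 2-way 2-shot episode (illustrative embeddings only).
support = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
labels = ["a", "a", "b", "b"]
scores = class_scores([0.8, 0.2], support, labels)
prediction = max(scores, key=scores.get)
```

Because every support sample contributes to the vote, no class prototype is computed; the prediction depends on the individual support embeddings.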

3. Relation Networks

Unlike the previous methods, Relation Networks adopt a learnable CNN to measure the pairwise similarity between support-set and query-set examples. The CNN takes the concatenation of the feature maps of a support sample and a query sample as input and outputs their relation score.
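The forward pass of such a learned similarity can be sketched as below. Note the simplifications: a tiny fully connected module on flat embedding vectors stands in for the CNN on feature maps, and the weights are random and untrained, so the scores themselves are meaningless. All names and dimensions are hypothetical.

```python
import math
import random

def relation_score(support_emb, query_emb, w_hidden, w_out):
    """Score one (support, query) pair with a tiny relation module."""
    pair = support_emb + query_emb  # concatenate the two embeddings
    # One hidden layer with ReLU activations.
    hidden = [max(0.0, sum(w * x for w, x in zip(row, pair))) for row in w_hidden]
    logit = sum(w * h for w, h in zip(w_out, hidden))
    return 1.0 / (1.0 + math.exp(-logit))  # sigmoid keeps the score in (0, 1)

rng = random.Random(0)
emb_dim, hidden_dim = 3, 4
# Untrained random weights; in a real model these are learned together with the embeddings.
w_hidden = [[rng.uniform(-1, 1) for _ in range(2 * emb_dim)] for _ in range(hidden_dim)]
w_out = [rng.uniform(-1, 1) for _ in range(hidden_dim)]

class_embeddings = {"a": [1.0, 0.0, 0.5], "b": [0.0, 1.0, 0.2]}
query = [0.9, 0.1, 0.4]
scores = {
    label: relation_score(emb, query, w_hidden, w_out)
    for label, emb in class_embeddings.items()
}
```

The key difference from the two previous sketches is that the comparison function has parameters of its own (`w_hidden`, `w_out`) rather than being a fixed distance.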

4. Model-Agnostic Meta-Learning (MAML)

MAML searches for a good parameter initialization, such that a base learner starting from this initialization can rapidly adapt to new tasks using a few support samples.
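To make the search for an initialization concrete, here is a toy sketch under strong assumptions: the base learner is a scalar model `y = theta * x`, each task is a line with its own slope, and adaptation is a single gradient step. With such a small model the gradient through the inner step can be written by hand, so no autodiff library is needed. The task family, learning rates, and function names are all hypothetical.

```python
import random

def grad(theta, xs, ys):
    """Gradient of the mean of 0.5 * (theta * x - y)^2 with respect to theta."""
    return sum((theta * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def maml_meta_gradient(theta, support, query, inner_lr):
    """Gradient of the post-adaptation query loss with respect to the initialization."""
    xs, ys = support
    xq, yq = query
    adapted = theta - inner_lr * grad(theta, xs, ys)  # one inner-loop step on the support set
    d_adapted = 1.0 - inner_lr * sum(x * x for x in xs) / len(xs)  # d(adapted) / d(theta)
    return grad(adapted, xq, yq) * d_adapted  # chain rule through the inner step

def sample_task(rng, n_points=5):
    """Hypothetical task family: y = slope * x with a task-specific slope."""
    slope = rng.uniform(1.0, 3.0)

    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n_points)]
        return xs, [slope * x for x in xs]

    return draw(), draw()  # (support set, query set)

rng = random.Random(0)
theta, inner_lr, meta_lr = 0.0, 0.1, 0.05
for _ in range(2000):
    tasks = [sample_task(rng) for _ in range(4)]
    meta_grad = sum(maml_meta_gradient(theta, s, q, inner_lr) for s, q in tasks) / len(tasks)
    theta -= meta_lr * meta_grad  # outer-loop update of the initialization
```

In this toy setting `theta` drifts toward the middle of the slope range, an initialization from which every task is reachable in few steps. Real implementations rely on automatic differentiation to backpropagate through the inner update.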

5. Reptile

This method is a first-order variant of MAML that moves the meta-learner's parameters directly toward the base learner's parameters after they have been updated on a task. It repeatedly samples a task, trains on this task, and moves the model weights toward the trained weights.
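The sample, train, and move loop is short enough to sketch directly. The example below assumes a toy scalar model `y = theta * x` and a hypothetical task family of lines with slopes drawn from [1, 3]; the step counts and learning rates are arbitrary choices for illustration.

```python
import random

def sgd_on_task(theta, slope, rng, steps=5, lr=0.1):
    """Train the scalar model y = theta * x on one task for a few SGD steps."""
    for _ in range(steps):
        x = rng.uniform(-1.0, 1.0)
        # Gradient of 0.5 * (theta * x - slope * x)^2 with respect to theta.
        theta -= lr * (theta * x - slope * x) * x
    return theta

rng = random.Random(0)
theta, meta_lr = 0.0, 0.1
for _ in range(3000):
    slope = rng.uniform(1.0, 3.0)             # sample a task
    adapted = sgd_on_task(theta, slope, rng)  # train on this task
    theta += meta_lr * (adapted - theta)      # move toward the trained weights
```

No gradient is propagated through the inner training loop; the outer update only needs the difference between the trained and the initial weights.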

6. Memory-Augmented Neural Networks (MANNs)

MANNs enable the neural network to learn quickly with the help of external memory. The network will gradually accumulate knowledge across tasks, while the external memory allows for quick task-specific adaptation.
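The external memory can be pictured as a small table of vectors that the network reads by similarity and writes to as new samples arrive. The sketch below is a heavily simplified illustration, not a full MANN: there is no controller network, the read uses a softmax over cosine similarities, and the write policy (overwrite the least-written slot) is a hypothetical simplification.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity, defined as 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def read_memory(key, memory):
    """Content-based read: softmax over key/slot similarity, then a weighted sum of slots."""
    exps = [math.exp(cosine_similarity(key, slot)) for slot in memory]
    total = sum(exps)
    weights = [e / total for e in exps]
    read = [sum(w * slot[i] for w, slot in zip(weights, memory)) for i in range(len(key))]
    return read, weights

def write_memory(memory, usage, vector):
    """Simplified write: overwrite the slot that has been written least often."""
    slot = usage.index(min(usage))
    memory[slot] = list(vector)
    usage[slot] += 1
    return slot

# Three empty slots; store two vectors, then query with a key close to the first one.
memory = [[0.0, 0.0] for _ in range(3)]
usage = [0, 0, 0]
write_memory(memory, usage, [1.0, 0.0])
write_memory(memory, usage, [0.0, 1.0])
read, weights = read_memory([0.9, 0.1], memory)
```

Writes take effect immediately, without any gradient update, which is what makes quick task-specific adaptation possible; the slowly learned network weights determine what to store and how to use what is read back.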

7. MetaNet

This method attaches a fast-weight layer to each layer of the base learner; the weights of these fast-weight layers are meta-generated in light of the input sample.