The purpose of this tutorial is to build a complete machine learning system that will classify text based on its sentiment. This system will consist of three main components. First, we will create a machine learning model that will be responsible for making predictions based on the provided text. Following that, we will build a backend that will host the trained model and provide an API endpoint to access it. Finally, we will design a frontend application that will interact with our model through the API and return the model’s prediction to the user. Now, let’s dive into the first part and create our machine learning model.

If you’re someone who prefers to dive right into the code, I’ve got you covered! You can find the entire project in the linked repository. Feel free to clone, fork, or download the repo and start your project. If you have any questions or if there’s something you’d like to discuss, just drop a comment — I’m always eager to help! Happy coding!

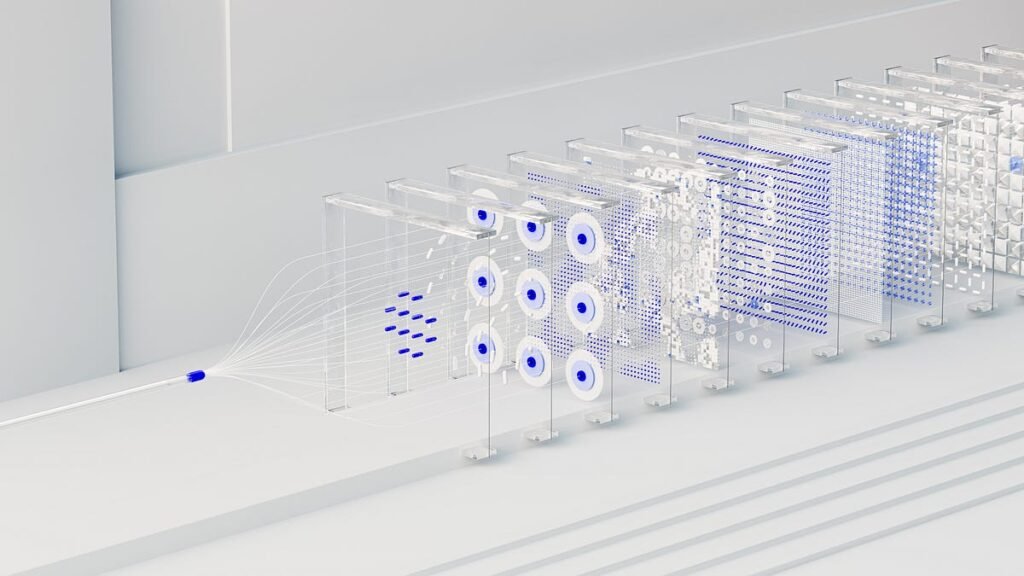

Sentiment analysis is a common task in the field of Natural Language Processing (NLP) where the purpose is to determine whether the sentiment of a piece of text is positive, negative, or neutral. Our goal here is to build a machine learning model that can effectively perform this task.

For this purpose, we will be leveraging PyTorch Lightning, a powerful library designed to simplify and expedite the machine learning model development process. PyTorch Lightning is essentially a lightweight PyTorch wrapper that takes the existing PyTorch code and adds a handful of features aimed at standardizing the training process, reducing boilerplate code, and making it more readable and shareable. It enables developers to focus on designing models and experiments while taking care of most of the engineering details.

Although this step is not mandatory, it is always beneficial to structure our machine learning projects in a way that is easy to understand and extend.

The folder structure we will use is as follows:

```
.
├── .data
├── .experiments
└── src
    └── ml
        ├── data
        ├── datasets
        ├── engines
        ├── models
        ├── scripts
        └── utils
```

Here’s a brief rundown of the organizational structure we’re employing. The .data directory stores all our dataset files, providing a dedicated place for all raw and processed data. Next, the .experiments directory contains the outcomes of our model training runs, making it easy to compare different model versions and track progress. Finally, all the source code of the machine learning module resides in the src/ml directory, which is further organized into subdirectories of related components.
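If you prefer to create this skeleton programmatically, a few lines of `pathlib` are enough. This is a convenience sketch: `create_layout` is a hypothetical helper and not part of the project's repository. It simply mirrors the tree above.

```python
from pathlib import Path

# Directories from the layout above (hypothetical helper, not from the repo)
LAYOUT = [
    ".data",
    ".experiments",
    "src/ml/data",
    "src/ml/datasets",
    "src/ml/engines",
    "src/ml/models",
    "src/ml/scripts",
    "src/ml/utils",
]


def create_layout(root: str) -> list[Path]:
    """Create the project skeleton under `root` and return the created paths."""
    created = []
    for rel in LAYOUT:
        path = Path(root) / rel
        # parents=True builds intermediate dirs; exist_ok=True makes reruns safe
        path.mkdir(parents=True, exist_ok=True)
        created.append(path)
    return created
```

Running `create_layout(".")` from the project root creates any missing directories and leaves existing ones untouched.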

Our project consists of multiple components, including the backend and the frontend (topics for upcoming posts), so it is crucial to keep a clean separation between the different modules. This lets us develop each component independently while minimizing interdependencies. If you want to learn more about this structure, I strongly encourage you to read this post, which explains in depth the role of each directory in the overall project setup.

Before diving into the implementation, it’s crucial to establish some basic concepts that are needed in almost every NLP task. If you’re already well-versed in these concepts, feel free to move ahead to the first phase of our sentiment analysis pipeline — data preparation. However, if you’re interested in reinforcing your understanding or just refreshing your memory, read on for a brief overview of these concepts.

Understanding the Role of Vocabulary

The first key concept we need to grasp for NLP tasks is the idea of a “vocabulary”. A vocabulary is simply the set of unique words in our dataset. It lets us transform unstructured text into a structured numerical representation that machine learning models can process. Each word in the vocabulary is associated with a unique index, creating a one-to-one correspondence between the words and their numerical counterparts.
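Here is a minimal illustration of the idea. It uses plain whitespace splitting instead of a real tokenizer, and the tiny corpus is invented. The project's own tokenizer, shown later, additionally reserves the first indices for special tokens.

```python
def build_vocabulary(sentences: list[str]) -> dict[str, int]:
    """Map each unique (lowercased) word to an index, in order of first appearance."""
    vocab: dict[str, int] = {}
    for sentence in sentences:
        for word in sentence.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


corpus = ["I love this movie", "I hate this weather"]
vocab = build_vocabulary(corpus)
# The inverse mapping turns indices back into words
inverse = {idx: word for word, idx in vocab.items()}

encoded = [vocab[w] for w in "i hate this movie".split()]  # [0, 4, 2, 3]
```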

The Power of Word Embeddings

While assigning unique numbers to words enables machine learning models to process text, this method does little to capture the semantic meanings and relationships between words. That’s where word embeddings come into play. Word embeddings are multi-dimensional vector representations of words that capture the semantic and syntactic similarities among words and not just their unique identifiers. In the vector space of word embeddings, words with similar meanings are located closer to each other, while words with different meanings are further apart. For instance, the words ‘king’ and ‘queen’ would be closer to each other in this vector space than ‘king’ and ‘apple’. This semantic mapping enables machine learning models to understand the relationships between words in a sentence, making them more capable of solving NLP-related tasks.
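"Closeness" in an embedding space is commonly measured with cosine similarity. The three-dimensional vectors below are made-up values chosen only to illustrate the idea. Real pre-trained embeddings are learned from data and have far more dimensions; the GloVe vectors used later in this project have 100.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up vectors for illustration only, not real embeddings
embeddings = {
    "king": [0.80, 0.65, 0.10],
    "queen": [0.75, 0.70, 0.15],
    "apple": [0.10, 0.20, 0.90],
}

king_queen = cosine_similarity(embeddings["king"], embeddings["queen"])  # about 0.997
king_apple = cosine_similarity(embeddings["king"], embeddings["apple"])  # about 0.31
```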

For our sentiment analysis task, we will use pre-trained word embeddings. These are vectors that have been trained on large text corpora like Wikipedia or Twitter, so they capture extensive semantic and syntactic relationships. By using them, we leverage the knowledge learned from these large corpora, which typically gives our model both better performance and faster training.

Getting the data

The first step in any machine learning project is to gather and preprocess our data. In this project, we’ll use the Sentiment140 dataset from Kaggle, which contains tweets collected via the Twitter API and labeled as positive or negative.

To download and use the dataset, we first need to log in to Kaggle and create an API token, which lets us interact with Kaggle’s API programmatically. The dataset can also be downloaded manually without a token. However, using the API is recommended because it avoids manual steps and makes the setup reproducible.

The steps to accomplish this are as follows:

1. Sign in to our Kaggle account and navigate to the account settings.
2. In the ‘API’ section, click ‘Create New Token’, which will download a file named `kaggle.json`.
3. Place the `kaggle.json` file in the location that matches our OS:
   - Linux: `~/.kaggle/kaggle.json`
   - Windows: `C:\Users\<Windows-username>\.kaggle\kaggle.json`

By following the steps above, we can now access Kaggle’s API and are ready to download and use the dataset in our project.
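Before running the download script, it can help to confirm the token is where the Kaggle client will look for it. This is a small sketch assuming the default locations listed above. `kaggle_token_path` is a hypothetical helper. It also checks the `KAGGLE_CONFIG_DIR` environment variable, which the Kaggle API documents as a way to override the default directory.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def kaggle_token_path(home: Optional[Path] = None,
                      env: Optional[Mapping[str, str]] = None) -> Path:
    """Return the expected location of kaggle.json (hypothetical helper)."""
    env = os.environ if env is None else env
    config_dir = env.get("KAGGLE_CONFIG_DIR")
    if config_dir:
        # An explicit config directory takes precedence
        return Path(config_dir) / "kaggle.json"
    home = Path.home() if home is None else home
    # ~/.kaggle/kaggle.json on Linux, C:\Users\<name>\.kaggle\kaggle.json on Windows
    return home / ".kaggle" / "kaggle.json"
```

For example, `kaggle_token_path().exists()` tells us whether the token is in place before `api.authenticate()` is called.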

To set up the dataset for our project, we’ll be using the script shown below:

```python
# src/ml/data/make_dataset.py
import os

import pandas as pd
from kaggle import KaggleApi
from sklearn.model_selection import train_test_split

from ml.utils.constants import RAW_DATA_DIR, PROCESSED_DATA_DIR


def make_dataset(split: float = 0.2, frac: float = 0.05):
    # https://www.kaggle.com/datasets/kazanova/sentiment140
    api = KaggleApi()
    api.authenticate()

    # Download the dataset from Kaggle
    api.dataset_download_files('kazanova/sentiment140', path=RAW_DATA_DIR, unzip=True)

    # Locate the single raw CSV file that was extracted
    raw_files = os.listdir(RAW_DATA_DIR)
    if len(raw_files) != 1:
        raise ValueError(f"Expected exactly one raw data file in {RAW_DATA_DIR}, found {len(raw_files)}")

    raw_data_file = os.path.join(RAW_DATA_DIR, raw_files[0])
    if not os.path.isfile(raw_data_file):
        raise ValueError(f"{raw_data_file} is not a file")

    # Load the downloaded data (raw_data_file already includes RAW_DATA_DIR)
    data = pd.read_csv(raw_data_file,
                       names=["target", "ids", "date", "flag", "user", "text"],
                       encoding="ISO-8859-1")

    # Keep only a portion of the data for faster training
    data = data.sample(frac=frac, random_state=0)
    data = data[["text", "target"]].copy()
    # Sentiment140 labels positive tweets as 4; map them to 1 for binary classification
    data["target"] = data["target"].replace(4, 1)

    # Create train and test sets
    train, test = train_test_split(data, test_size=split, random_state=0)

    os.makedirs(PROCESSED_DATA_DIR, exist_ok=True)
    train.to_csv(os.path.join(PROCESSED_DATA_DIR, "train.csv"), index=False, encoding="ISO-8859-1")
    test.to_csv(os.path.join(PROCESSED_DATA_DIR, "test.csv"), index=False, encoding="ISO-8859-1")
```

In short, this script does four things:

- It downloads the raw dataset.
- It keeps only the text and label columns.
- It splits the data into train and test sets.
- It saves both sets to a dedicated location for later use.

Note that, to keep training fast for this tutorial, we use only a small fraction of the dataset (5% by default, controlled by the frac parameter).
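The label handling is worth a closer look. Sentiment140 encodes polarity as 0 for negative and 4 for positive, so `replace(4, 1)` produces the 0/1 targets a binary classifier expects. The sketch below applies the same column selection and relabeling using only the standard `csv` module. The two rows are invented but follow the dataset's column order; the real file has no header row.

```python
import csv
import io

COLUMNS = ["target", "ids", "date", "flag", "user", "text"]

# Two invented rows in Sentiment140's column order (no header line)
RAW = (
    '"0","1","Mon Apr 06 22:19:45 PDT 2009","NO_QUERY","alice","my flight got cancelled"\n'
    '"4","2","Mon Apr 06 22:20:01 PDT 2009","NO_QUERY","bob","what a lovely morning"\n'
)


def load_rows(raw: str) -> list[dict]:
    """Keep only text and target, mapping the positive label 4 to 1."""
    rows = []
    for record in csv.DictReader(io.StringIO(raw), fieldnames=COLUMNS):
        target = int(record["target"])
        rows.append({"text": record["text"], "target": 1 if target == 4 else target})
    return rows


rows = load_rows(RAW)
```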

Defining the Dataset

In PyTorch, a Dataset is a handy tool that lets us organize our data in an easy-to-use format. When we create a Dataset, we basically tell PyTorch how to get data and its corresponding label. This involves creating a class and defining two key methods: __len__ and __getitem__. The __len__ method tells PyTorch how many data samples we have, while the __getitem__ method tells PyTorch how to get the n-th data sample.

For our sentiment analysis task, we will create a Dataset that tells PyTorch how to access our tweet text and its sentiment label. The Dataset creation script can be seen below:

```python
# src/ml/datasets/tweet_dataset.py
from pandas import DataFrame
from torch.utils.data import Dataset


class TweetDataset(Dataset):
    def __init__(self, data: DataFrame):
        self.data = data
        self.tweets = list(data.text)
        self.labels = list(data.target)

    def __len__(self):
        """Denotes the total number of samples"""
        return len(self.data)

    def __getitem__(self, index):
        return {'tweet': self.tweets[index],
                'label': self.labels[index]}
```
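When a `DataLoader` batches these dictionaries, PyTorch's default collation merges them key by key. The strings under `'tweet'` end up in a Python list, and the integer labels are stacked into a tensor. That is why `TweetSystem` later receives `batch["tweet"]` as a list of strings. The pure-Python sketch below imitates only this key-by-key merging, using plain lists instead of tensors. It is a toy for intuition, not PyTorch's implementation: `ToyTweetDataset`, `collate`, and `batches` are hypothetical names.

```python
from typing import Any


class ToyTweetDataset:
    """Same __len__/__getitem__ protocol as TweetDataset, without pandas or torch."""

    def __init__(self, tweets: list[str], labels: list[int]):
        self.tweets = tweets
        self.labels = labels

    def __len__(self) -> int:
        return len(self.tweets)

    def __getitem__(self, index: int) -> dict[str, Any]:
        return {"tweet": self.tweets[index], "label": self.labels[index]}


def collate(samples: list[dict[str, Any]]) -> dict[str, list]:
    """Merge a list of sample dicts into one dict of lists, key by key."""
    return {key: [sample[key] for sample in samples] for key in samples[0]}


def batches(dataset, batch_size: int):
    """Yield collated batches in order (no shuffling)."""
    for start in range(0, len(dataset), batch_size):
        end = min(start + batch_size, len(dataset))
        yield collate([dataset[i] for i in range(start, end)])


ds = ToyTweetDataset(["great day", "awful traffic", "so happy"], [1, 0, 1])
result = list(batches(ds, batch_size=2))
```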

Managing data processes with LightningDataModule

In the vast majority of machine learning projects, data cleaning, preparation, and preprocessing are tasks that are often scattered across multiple locations and files. As the project expands, this can lead to concerns such as difficulty in tracking changes applied to our data and maintaining consistency in data handling.

That is where PyTorch Lightning’s DataModule comes to the rescue. It provides a high-level abstraction over the data pipeline. Every data procedure lives in a single class that can be easily shared, reused, and tested: data preparation, splitting, processing, and the creation of PyTorch Dataset and DataLoader objects. This keeps our data handling consistent and lets the model code focus on learning from the data rather than managing it.

Our LightningDataModule is defined as shown below:

```python
# src/ml/datasets/tweet_datamodule.py
import os

import pandas as pd
from lightning.pytorch import LightningDataModule
from sklearn.model_selection import train_test_split
from torch.utils.data import DataLoader

from ml.data.make_dataset import make_dataset
from ml.data.preprocessing import preprocess
from ml.datasets.tweet_dataset import TweetDataset
from ml.utils.constants import PROCESSED_DATA_DIR


class TweetDataModule(LightningDataModule):
    def __init__(self,
                 batch_size: int = 32,
                 split: float = 0.1,
                 recreate_data: bool = False):
        super().__init__()
        self.batch_size = batch_size
        self.split = split
        self.recreate_data = recreate_data
        self.has_setup = False

    def prepare_data(self):
        # Download and split the raw data only if it is missing (or explicitly requested)
        if (self.recreate_data
                or not os.path.exists(PROCESSED_DATA_DIR)
                or len(os.listdir(PROCESSED_DATA_DIR)) == 0):
            make_dataset()

    def setup(self, stage: str):
        # Assign train/val datasets for use in dataloaders
        if stage == "fit":
            # Avoid re-processing if setup("fit") is called more than once
            if not self.has_setup:
                self.has_setup = True
                data = self.process_data(stage)
                # Split the training file into train and validation sets
                self.train_data, self.val_data = train_test_split(data, test_size=self.split, random_state=0)
                self.train_dataset = TweetDataset(self.train_data)
                self.val_dataset = TweetDataset(self.val_data)

        if stage in (None, "test", "predict"):
            self.test_data = self.process_data(stage)
            self.test_dataset = TweetDataset(self.test_data)

    def train_dataloader(self):
        return DataLoader(self.train_dataset, batch_size=self.batch_size, shuffle=True)

    def val_dataloader(self):
        return DataLoader(self.val_dataset, batch_size=self.batch_size)

    def test_dataloader(self):
        return DataLoader(self.test_dataset, batch_size=self.batch_size)

    def predict_dataloader(self):
        return DataLoader(self.test_dataset, batch_size=self.batch_size)

    def process_data(self, stage: str):
        file_name = None
        if stage == "fit":
            file_name = "train.csv"
        if stage in (None, "test", "predict"):
            file_name = "test.csv"
        if file_name is None:
            raise ValueError(f"Stage {stage} is not valid.")

        data = pd.read_csv(os.path.join(PROCESSED_DATA_DIR, file_name), encoding="ISO-8859-1")
        # progress_apply needs tqdm.pandas() to have been called (the training script does this)
        data["text"] = data.text.progress_apply(preprocess)
        return data
```

Let’s break down each method:

- `__init__`: The constructor of the class. It can take any number of parameters and is used to initialize our DataModule.
- `prepare_data`: This method is called first in the data processing pipeline. It runs one-time processes such as our `make_dataset` function, which downloads the data and splits it into train and test datasets.
- `setup`: This method prepares the datasets for training, validation, and testing. This is where data operations such as preprocessing, splitting, and dataset creation should happen.
- `train_dataloader`, `val_dataloader`, `test_dataloader`, and `predict_dataloader`: These methods return DataLoaders for the corresponding datasets. A DataLoader is a PyTorch utility that iterates over a dataset in batches.
- `process_data`: A helper function that loads the data and applies preprocessing to the tweets in the dataset. The preprocessing steps applied can be seen below, and they can be extended with further steps.

```python
# src/ml/data/preprocessing.py
import re


def preprocess(x):
    # Make text lower case
    x = x.lower()
    # Remove tags of other people (@mentions)
    x = re.sub(r"@\w*", " ", x)
    # Remove special characters
    x = re.sub(r"#|^\*|\*$|\"|>|<|<3", " ", x)
    x = x.replace("&", " and ")
    # Remove links
    x = re.sub(r"ht+p+s?://\S*", " ", x)
    # Remove non-ascii
    x = re.sub(r"[^\x00-\x7F]", " ", x)
    # Remove time
    x = re.sub(r"((a|p)\.?m)?\s?(\d+(:|\.)?\d+)\s?((a|p)\.?m)?", " ", x)
    # Remove brackets if left after removing time
    x = re.sub(r"\(\)|\[\]|\{\}", " ", x)
    # Collapse runs of a repeated letter to two occurrences
    # (e.g. "goooood" -> "good", without changing good to god)
    x = re.sub(r"([a-z])\1+", r"\1\1", x)
    # Remove any string that starts with a number
    x = re.sub(r"\d[\w]*", " ", x)
    # Remove all special characters left
    x = re.sub(r"[^a-zA-Z0-9 ]", "", x)
    # Remove isolated single letters except a and i (the class [b-gj-z] also skips h)
    x = re.sub(r"\s[b-gj-z]\s", " ", x)
    # Remove multiple space chars
    x = " ".join(x.split()).strip()
    return x
```
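To get a feel for what these rules do, here is a reduced subset of them applied to an invented tweet: lowercasing, mention and link removal, letter-run collapsing, punctuation removal, and whitespace cleanup. `clean_subset` is a hypothetical trimmed-down helper for illustration, not a replacement for `preprocess`. It applies its steps in the same relative order as the full function.

```python
import re


def clean_subset(x: str) -> str:
    x = x.lower()
    x = re.sub(r"@\w*", " ", x)            # drop @mentions
    x = re.sub(r"ht+p+s?://\S*", " ", x)   # drop links
    x = re.sub(r"([a-z])\1+", r"\1\1", x)  # cap letter runs at two: "sooo" -> "soo"
    x = re.sub(r"[^a-zA-Z0-9 ]", "", x)    # drop remaining punctuation
    return " ".join(x.split())             # collapse whitespace


example = clean_subset("@Bob Sooo goood!! http://t.co/abc")  # "soo good"
```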

By using Lightning’s DataModule, we’re keeping all the tasks related to handling data in one place, making our code easier to understand and manage. Having now established our data pipeline, it is time to transition to the steps necessary for constructing our sentiment analysis model.
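One detail of `TweetDataModule` deserves a note. The training script calls `prepare_data()` and `setup(stage="fit")` itself, because it needs `train_data` to build the tokenizer. `trainer.fit` then calls the same hooks again. The `has_setup` flag keeps the expensive preprocessing from running twice, and `prepare_data` skips the download once processed files exist. The stand-in below makes this visible without Lightning. `ToyDataModule` and `simplified_fit` are hypothetical, and `simplified_fit` reproduces only the "prepare, then set up" order, not everything `Trainer.fit` does.

```python
class ToyDataModule:
    """Records hook calls; mimics only the has_setup guard of TweetDataModule."""

    def __init__(self):
        self.calls: list[str] = []
        self.has_setup = False
        self.times_processed = 0

    def prepare_data(self) -> None:
        self.calls.append("prepare_data")

    def setup(self, stage: str) -> None:
        self.calls.append(f"setup({stage})")
        if stage == "fit" and not self.has_setup:
            self.has_setup = True
            self.times_processed += 1  # stands in for process_data + splitting


def simplified_fit(dm: ToyDataModule) -> None:
    """Simplified stand-in for the data hooks the trainer runs before training."""
    dm.prepare_data()
    dm.setup("fit")


dm = ToyDataModule()
dm.prepare_data()      # called explicitly in train()
dm.setup(stage="fit")  # called explicitly in train()
simplified_fit(dm)     # the trainer calls both hooks again
```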

Building the Model

PyTorch provides a flexible and intuitive framework for defining our machine learning models. When we create a model, we define a class that inherits from nn.Module. Within this class, we initialize the layers we will be using in the __init__ method and define how the data will pass through our model in the forward method.

Since our task is sentiment analysis, we will use an LSTM (Long Short-Term Memory) model. LSTMs are especially suited to sequential data like text, where the order of words plays a significant role in determining sentiment. Our model can be seen below:

```python
# src/ml/models/lstm.py
import torch
import torch.nn.functional as F
from torch import nn


class LSTMClassifier(nn.Module):
    def __init__(
        self,
        embeddings: torch.Tensor,
        lstm_hidden_size: int = 128,
        lstm_num_layers: int = 1,
        mlp_hidden_sizes: tuple = (128,),
        dropout: float = 0.2,
    ):
        super().__init__()
        # Store the dropout rate; forward() applies it to the MLP activations
        self.dropout = dropout
        # Frozen pre-trained embeddings; index 0 is the <pad> token
        self.embedding = nn.Embedding.from_pretrained(
            torch.as_tensor(embeddings, dtype=torch.float), freeze=True, padding_idx=0
        )
        # nn.LSTM applies dropout only between stacked layers, so disable it for a single layer
        self.lstm = nn.LSTM(
            embeddings.shape[1],
            lstm_hidden_size,
            batch_first=True,
            dropout=dropout if lstm_num_layers > 1 else 0.0,
            num_layers=lstm_num_layers,
        )
        # Initialize the MLP input and hidden layers dynamically from mlp_hidden_sizes
        self.mlp = nn.ModuleList()
        self.mlp.append(nn.Linear(lstm_hidden_size, mlp_hidden_sizes[0]))
        for i in range(len(mlp_hidden_sizes) - 1):
            self.mlp.append(nn.Linear(mlp_hidden_sizes[i], mlp_hidden_sizes[i + 1]))
        # Initialize the output layer (a single logit for binary classification)
        self.mlp.append(nn.Linear(mlp_hidden_sizes[-1], 1))

    def forward(self, x):
        # x is the (sequences, mask, lengths) tuple produced by the tokenizer
        seq, mask, lengths = x[0], x[1], x[2]
        emb = self.embedding(seq)
        # Pack the batch so the LSTM skips padding; lengths must be on the CPU
        packed_embedded = nn.utils.rnn.pack_padded_sequence(emb, lengths.cpu(), batch_first=True, enforce_sorted=False)
        _, (hidden, _) = self.lstm(packed_embedded)
        # hidden[-1] is the last layer's final hidden state for each sequence
        x = F.relu(self.mlp[0](hidden[-1]))
        for layer in self.mlp[1:-1]:
            x = F.relu(layer(x))
        # Apply dropout only while training (training=self.training)
        x = F.dropout(x, p=self.dropout, training=self.training)
        x = self.mlp[-1](x)
        return x

    @property
    def name(self):
        return self.__class__.__name__
```
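For reference, these are the per-time-step updates that the PyTorch documentation gives for each `nn.LSTM` layer. Here $x_t$ is the input embedding, $h_{t-1}$ and $c_{t-1}$ are the previous hidden and cell states, $\sigma$ is the sigmoid function, and $\odot$ denotes element-wise multiplication:

$$
\begin{aligned}
i_t &= \sigma(W_{ii} x_t + b_{ii} + W_{hi} h_{t-1} + b_{hi}) \\
f_t &= \sigma(W_{if} x_t + b_{if} + W_{hf} h_{t-1} + b_{hf}) \\
g_t &= \tanh(W_{ig} x_t + b_{ig} + W_{hg} h_{t-1} + b_{hg}) \\
o_t &= \sigma(W_{io} x_t + b_{io} + W_{ho} h_{t-1} + b_{ho}) \\
c_t &= f_t \odot c_{t-1} + i_t \odot g_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
$$

Because the embedded batch is packed, `hidden[-1]` holds the last layer's $h_t$ at each sequence's true final token rather than at a padding position. That vector is what the MLP head turns into a single sentiment logit.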

Preparing Text for the Model

Our model is initialized with the pre-trained embeddings of the words in our data’s vocabulary. To map raw text to the correct embeddings, the text must first be encoded in a numerical format the model understands. That’s where tokenization comes in.

The first step of tokenization involves dissecting the input text into individual elements, or ‘tokens’. In most cases, these tokens represent words, but they can also be phrases, sentences, or other text subdivisions. Once the text is tokenized, the next step is to encode these tokens. During this phase, each unique token in our vocabulary is assigned a unique numerical identifier.

The tool that accomplishes these two tasks is usually called the tokenizer. It serves as a sort of wrapper class around our dataset’s vocabulary. When given an input sentence, it tokenizes the sentence, then encodes the resulting tokens based on our vocabulary. The output is a sequence of numerical identifiers representing the input sentence. These sequences are then passed to our LSTM model which can correctly retrieve the corresponding embeddings of the words in our vocab.

Our tokenizer implementation can be seen below:

```python
# src/ml/utils/tokenizer.py
import json
import os

import torch
from torchtext.data import get_tokenizer
from tqdm import tqdm


class Tokenizer:
    def __init__(self):
        self.vocab = {}
        self.inv_vocab = {}
        self.tokenizer = get_tokenizer('basic_english')
        self.special_tokens = ['<pad>', '<unk>', '<sos>', '<eos>']

    def fit_on_texts_and_embeddings(self, sentences: list[str], embeddings):
        # Add special tokens to the start of the vocab (<pad> gets index 0)
        for special_token in self.special_tokens:
            self.vocab[special_token] = len(self.vocab)
        # Add each unique word in the sentences to the vocab if there is also an embedding for it
        for sentence in tqdm(sentences):
            for word in self.tokenizer(sentence):
                if word not in self.vocab and word in embeddings.stoi:
                    self.vocab[word] = len(self.vocab)
        self.inv_vocab = {v: k for k, v in self.vocab.items()}

    # Create an embedding matrix from a set of pretrained embeddings based on the vocab
    def get_embeddings_matrix(self, embeddings):
        # Create a matrix of zeros with one row per vocab entry
        embeddings_matrix = torch.zeros((len(self.vocab), embeddings.dim))
        # For each word in the vocab get its index and add its embedding to the matrix
        for word, idx in self.vocab.items():
            if word in self.special_tokens:
                continue
            if word in embeddings.stoi:
                embeddings_matrix[idx] = embeddings[word]
            else:
                raise KeyError(f"Word {word} not in embeddings. Please create tokenizer based on embeddings")
        n_special = len(self.special_tokens)
        # Row 0 (<pad>) stays all zeros.
        # Initialize the <unk> token (index 1) with the mean of the embeddings of the vocab
        embeddings_matrix[1] = torch.mean(embeddings_matrix[n_special:], dim=0)
        # Initialize the <sos> and <eos> tokens with the mean of the embeddings of the vocab
        # plus or minus a small amount of noise, so they differ from the <unk> token
        # and from each other (identical embeddings could not be distinguished by the model)
        noise = torch.normal(mean=0.0, std=0.1, size=(embeddings.dim,))
        embeddings_matrix[2] = torch.mean(embeddings_matrix[n_special:] + noise, dim=0)
        embeddings_matrix[3] = torch.mean(embeddings_matrix[n_special:] - noise, dim=0)
        return embeddings_matrix

    # Add start-of-sentence and end-of-sentence tokens to the tokenized sentence
    def add_special_tokens(self, tokens):
        return ["<sos>"] + tokens + ["<eos>"]

    # Convert a sequence of words to a sequence of indices based on the vocab
    def convert_tokens_to_ids(self, tokens):
        return [self.vocab.get(token, self.vocab['<unk>']) for token in tokens]

    def pad_sequences(self, sequences, max_length=None):
        # Pad the vectorized sequences; if max_length is not specified,
        # pad to the length of the longest sequence
        if not max_length:
            max_length = max(len(seq) for seq in sequences)
        # Lengths of the sequences, capped at max_length
        lengths = [min(len(seq), max_length) for seq in sequences]
        sequence_lengths = torch.LongTensor(lengths)
        # Tensors of zeros (the <pad> index) for the sequences and the masks
        seq_tensor = torch.zeros((len(sequences), max_length), dtype=torch.long)
        seq_mask = torch.zeros((len(sequences), max_length), dtype=torch.long)
        # Copy each sequence into seq_tensor and set 1s in seq_mask up to its length
        for idx, (seq, seq_len) in enumerate(zip(sequences, lengths)):
            # Truncate the sequence if it exceeds the max length
            seq = seq[:seq_len]
            seq_tensor[idx, :seq_len] = torch.LongTensor(seq)
            seq_mask[idx, :seq_len] = 1
        return seq_tensor, seq_mask, sequence_lengths

    # Split the text into tokens
    def tokenize(self, text):
        return self.tokenizer(text)

    def encode(self, texts, max_length=None):
        if isinstance(texts, str):
            texts = [texts]
        sequences = []
        for text in texts:
            tokens = self.tokenize(text)
            tokens = self.add_special_tokens(tokens)
            ids = self.convert_tokens_to_ids(tokens)
            sequences.append(ids)
        return self.pad_sequences(sequences, max_length)

    def __call__(self, texts, max_length=None):
        return self.encode(texts, max_length)

    # NOTE: the training script calls tokenizer.save(), which this listing did not show.
    # This JSON-based version (writing vocab.json) is an assumed, minimal implementation.
    def save(self, output_dir):
        os.makedirs(output_dir, exist_ok=True)
        with open(os.path.join(output_dir, "vocab.json"), "w", encoding="utf-8") as f:
            json.dump(self.vocab, f)
```
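To see the encode path end to end without downloading GloVe, here is a pure-Python mirror of the same steps:

- Special tokens take the first indices.
- Only words with an embedding enter the vocabulary.
- Each sentence is wrapped in `<sos>`/`<eos>`.
- Unknown words fall back to `<unk>`.
- Sequences are zero-padded, with a mask and lengths.

Two simplifications are assumptions of this sketch. Whitespace splitting plus lowercasing stands in for torchtext's `basic_english` tokenizer. A plain set of words stands in for the embedding vocabulary (`embeddings.stoi`). Lists replace tensors.

```python
PAD, UNK, SOS, EOS = "<pad>", "<unk>", "<sos>", "<eos>"


def build_vocab(sentences: list[str], known_words: set[str]) -> dict[str, int]:
    # Special tokens take indices 0-3, so <pad> is 0 (matching padding_idx=0)
    vocab = {tok: i for i, tok in enumerate([PAD, UNK, SOS, EOS])}
    for sentence in sentences:
        for word in sentence.lower().split():
            # Only words that have a pre-trained embedding enter the vocab
            if word not in vocab and word in known_words:
                vocab[word] = len(vocab)
    return vocab


def encode(texts: list[str], vocab: dict[str, int], max_length=None):
    seqs = []
    for text in texts:
        tokens = [SOS] + text.lower().split() + [EOS]
        seqs.append([vocab.get(tok, vocab[UNK]) for tok in tokens])
    if max_length is None:
        max_length = max(len(s) for s in seqs)
    lengths = [min(len(s), max_length) for s in seqs]
    # Truncate long sequences and right-pad short ones with the <pad> index
    ids = [s[:n] + [vocab[PAD]] * (max_length - n) for s, n in zip(seqs, lengths)]
    mask = [[1] * n + [0] * (max_length - n) for n in lengths]
    return ids, mask, lengths


vocab = build_vocab(["i love this", "i hate rain"], known_words={"i", "love", "hate", "this"})
ids, mask, lengths = encode(["I love rain", "hate"], vocab)
# ids     -> [[2, 4, 5, 1, 3], [2, 7, 3, 0, 0]]  ("rain" has no embedding, so it maps to <unk>=1)
# mask    -> [[1, 1, 1, 1, 1], [1, 1, 1, 0, 0]]
# lengths -> [5, 3]
```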

Streamlining the Training Process with LightningModule

Once we’ve defined our model, the next step is to set up the training process. This involves defining how the data is fed to the model, calculating the loss, updating the model’s weights, and then evaluating the model’s performance.

All these steps can be written in raw PyTorch, but doing so often leads to repeated boilerplate and can even introduce logical errors, especially with complex models and workflows. This is where PyTorch Lightning steps in with the LightningModule class. Like LightningDataModule, it encapsulates all aspects of the training logic in a single class. It lets us define the training, validation, and test steps and configure the optimizer, while it takes care of repetitive steps such as backpropagation and weight updates. This makes our code cleaner and easier to understand. It also makes it straightforward to experiment with different models and tune hyperparameters.

The LightningModule for our sentiment analysis classifier looks as follows:

```python
# src/ml/engines/system.py
import torch
from lightning.pytorch import LightningModule
from torch import nn
from torch.optim import Adam
from torchmetrics.classification import BinaryAccuracy

from ml.utils.tokenizer import Tokenizer


class TweetSystem(LightningModule):
    def __init__(self,
                 model: nn.Module,
                 tokenizer: Tokenizer,
                 learning_rate=1e-3,
                 ):
        super().__init__()
        self.learning_rate = learning_rate
        self.model = model
        self.tokenizer = tokenizer
        # Combines a sigmoid with binary cross-entropy, so the model outputs raw logits
        self.criterion = nn.BCEWithLogitsLoss()
        self.train_accuracy = BinaryAccuracy()
        self.val_accuracy = BinaryAccuracy()
        self.test_accuracy = BinaryAccuracy()

    def forward(self, x: list[str]):
        # Tokenize the raw tweets and move (sequences, mask, lengths) to the model's device
        inputs = self.tokenizer(x)
        inputs = tuple(i.to(self.device) for i in inputs)
        return self.model(inputs).view(-1)

    def training_step(self, batch, batch_idx):
        # training_step defines the train loop
        x, y = batch["tweet"], batch["label"]
        logits = self(x)
        loss = self.criterion(logits, y.float())
        # Pass probabilities so the metric's 0.5 threshold is applied to sigmoid outputs
        self.train_accuracy(torch.sigmoid(logits), y)
        self.log('train_acc', self.train_accuracy, on_step=True, on_epoch=True, prog_bar=True)
        self.log('train_loss', loss, on_step=True, on_epoch=True, prog_bar=True)
        return loss

    def validation_step(self, batch, batch_idx):
        # This is the validation loop
        self._shared_eval(batch, batch_idx, "val")

    def test_step(self, batch, batch_idx):
        # This is the test loop
        self._shared_eval(batch, batch_idx, "test")

    def _shared_eval(self, batch, batch_idx, prefix):
        x, y = batch["tweet"], batch["label"]
        logits = self(x)
        loss = self.criterion(logits, y.float())
        accuracy = self.val_accuracy if prefix == "val" else self.test_accuracy
        accuracy(torch.sigmoid(logits), y)
        self.log(f"{prefix}_acc", accuracy, on_step=True, on_epoch=True, prog_bar=True)
        self.log(f"{prefix}_loss", loss, on_step=True, on_epoch=True, prog_bar=True)

    def predict_step(self, batch, batch_idx, dataloader_idx=0):
        # Returns raw logits; apply torch.sigmoid to get probabilities
        return self(batch["tweet"])

    def configure_optimizers(self):
        return Adam(self.model.parameters(), lr=self.learning_rate)
```

The LightningModule above serves as the backbone of our sentiment analysis classifier. Let’s take a closer look at its components.

The TweetSystem class begins by initializing our model, tokenizer, learning rate, loss function, and the accuracy metrics we want to track.

The forward method takes a list of tweets as input, converts them into sequences of numbers of the same length using our tokenizer, and feeds them to our model.

The training_step, validation_step, and test_step methods each process a single batch of data. Each step performs a forward pass, calculates the loss, and updates the corresponding accuracy metric. In the training step, Lightning then uses the returned loss to update our model’s parameters.
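To make the loss and accuracy concrete: `BCEWithLogitsLoss` applies the sigmoid internally, so the model returns a raw logit $z$. The per-example loss is $-[y \log \sigma(z) + (1-y)\log(1-\sigma(z))]$, averaged over the batch by default. A prediction is typically counted as positive when $\sigma(z)$ exceeds 0.5, which is equivalent to $z > 0$. The stdlib sketch below computes both quantities for a few invented logits. The helper names are hypothetical, and it is not the PyTorch or torchmetrics implementation.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def bce_with_logits(logits: list[float], targets: list[int]) -> float:
    """Mean binary cross-entropy computed from raw logits.

    Uses the numerically stable form max(z, 0) - z*y + log(1 + exp(-|z|)),
    which equals -[y*log(sigmoid(z)) + (1-y)*log(1-sigmoid(z))].
    """
    losses = [max(z, 0.0) - z * y + math.log1p(math.exp(-abs(z)))
              for z, y in zip(logits, targets)]
    return sum(losses) / len(losses)


def accuracy_from_logits(logits: list[float], targets: list[int]) -> float:
    # sigmoid(z) > 0.5 exactly when z > 0
    preds = [1 if z > 0 else 0 for z in logits]
    return sum(p == y for p, y in zip(preds, targets)) / len(targets)


logits = [2.0, -1.0, 0.5, -3.0]  # invented model outputs
targets = [1, 0, 0, 0]
loss = bce_with_logits(logits, targets)
acc = accuracy_from_logits(logits, targets)  # 0.75: only the third example is wrong
```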

Finally, the configure_optimizers method is where we define the optimizer that will be used to update our model’s parameters based on the calculated loss.

Now that we have covered the key components of our sentiment analysis pipeline, including the data module, the model architecture, and the PyTorch Lightning module, it’s time to bring everything together and define the training script.

The training script is the entry point for training our sentiment analysis classifier. It orchestrates the entire process: data loading and preprocessing, model initialization, training, and evaluation.

The training process looks as follows:

```python
import os
import sys

from lightning.pytorch import Trainer
from lightning.pytorch.callbacks import TQDMProgressBar, EarlyStopping, ModelCheckpoint
from lightning.pytorch.loggers import TensorBoardLogger
from torchtext.vocab import GloVe
from tqdm import tqdm

from ml.datasets.tweet_datamodule import TweetDataModule
from ml.engines.system import TweetSystem
from ml.models.lstm import LSTMClassifier
from ml.utils.constants import EXPERIMENTS_DIR, EMBEDDINGS_DIR
from ml.utils.tokenizer import Tokenizer

# Register tqdm's progress_apply on pandas objects (used by TweetDataModule.process_data)
tqdm.pandas(file=sys.stdout)


def get_callbacks():
    tqdm_callback = TQDMProgressBar(refresh_rate=1)
    # Keep the checkpoint with the lowest validation loss, plus the last one
    checkpoint_callback = ModelCheckpoint(save_last=True, save_top_k=1,
                                          filename="best-loss-model-{epoch:02d}-{val_loss:.2f}",
                                          monitor="val_loss",
                                          mode="min")
    checkpoint_callback.CHECKPOINT_NAME_LAST = "last-model-{epoch:02d}-{val_loss:.2f}"
    # Stop when the validation loss has not improved for 5 consecutive validation checks
    early_stopping_callback = EarlyStopping(monitor="val_loss", mode="min", patience=5, verbose=True)
    return [tqdm_callback, checkpoint_callback, early_stopping_callback]


def train():
    # Set up the data module.
    # This takes care of downloading the data, preprocessing it,
    # splitting it into train/val/test sets, and encoding the labels
    tweet_data_module = TweetDataModule()
    tweet_data_module.prepare_data()
    tweet_data_module.setup(stage="fit")

    pretrained_embeddings = GloVe(cache=EMBEDDINGS_DIR, name='twitter.27B', dim=100)

    # Create the Tokenizer vocab based on the pretrained embeddings and the training data
    tokenizer = Tokenizer()
    tokenizer.fit_on_texts_and_embeddings(tweet_data_module.train_data.text, pretrained_embeddings)
    embeddings_matrix = tokenizer.get_embeddings_matrix(pretrained_embeddings)

    model = LSTMClassifier(embeddings_matrix)
    tweet_system = TweetSystem(model=model, tokenizer=tokenizer)

    tb_logger = TensorBoardLogger(EXPERIMENTS_DIR, name=model.name)
    # Save the tokenizer next to the logs and checkpoints of this run
    output_dir = os.path.join(EXPERIMENTS_DIR, model.name, f"version_{tb_logger.version}")
    tokenizer.save(output_dir)

    trainer = Trainer(devices="auto",
                      accelerator="auto",
                      max_epochs=10,
                      callbacks=get_callbacks(),
                      logger=tb_logger,
                      log_every_n_steps=1)
    trainer.fit(model=tweet_system, datamodule=tweet_data_module)


if __name__ == "__main__":
    train()
```

By running the training script, our sentiment analysis model will be trained using the specified data and settings. Throughout the training process, PyTorch Lightning takes care of logging metrics, checkpointing, and other training details, making it a convenient and efficient way to train our sentiment analysis model.
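The two callbacks that watch `val_loss` follow simple rules:

- `ModelCheckpoint(save_top_k=1, mode="min")` keeps the epoch with the lowest loss seen so far.
- `EarlyStopping(patience=5, mode="min")` stops once the loss has failed to improve on its best value for five consecutive validation checks.

The toy function below replays these rules on an invented sequence of validation losses. It is a simplified re-implementation for intuition (it ignores options such as `min_delta`), not Lightning's code, and `replay_callbacks` is a hypothetical name.

```python
def replay_callbacks(val_losses: list[float], patience: int = 5):
    """Return (best_epoch, stop_epoch); stop_epoch is None if training runs to the end."""
    best_loss = float("inf")
    best_epoch = None
    wait = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # Strict improvement: this epoch becomes the "best" checkpoint
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return best_epoch, epoch
    return best_epoch, None


# Invented validation losses for 8 epochs: the best is epoch 2, and training stops at epoch 7
losses = [0.52, 0.47, 0.46, 0.48, 0.47, 0.49, 0.46, 0.50]
best_epoch, stop_epoch = replay_callbacks(losses)
```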

Once the model is trained, we can load one of the saved checkpoints and use it for testing and making predictions. Our model achieves an accuracy of 80%, which is quite satisfactory given its simple architecture and the absence of any hyperparameter tuning.

You can check out the repository which includes a test script that demonstrates how to load a trained model and evaluate its performance on the test set.

In this post, we have explored the process of building a sentiment analysis classifier using PyTorch and PyTorch Lightning. By utilizing the Lightning DataModule, we seamlessly handled all the data-related tasks, while the LightningModule encapsulated our model and handled the training, evaluation, and testing processes. This abstraction allowed us to concentrate on the essential aspects of our sentiment analysis task without worrying about the underlying implementation details.

By following this tutorial, you now have the knowledge and tools to build your own machine learning system using PyTorch and PyTorch Lightning. From sentiment analysis to other complex tasks, consider this project and its structure as a foundational guide and adapt it to build machine learning systems tailored to your specific needs.

In the next part, we’ll delve into how we can expose our trained model as an API for making predictions. Stay tuned!

You can find this project on my Github at this repository. If you have any questions or suggestions, I’d love to hear them! Please don’t hesitate to leave a comment — it’s always great to have a chat and discuss these topics further.