Building a Neural Network (short article)

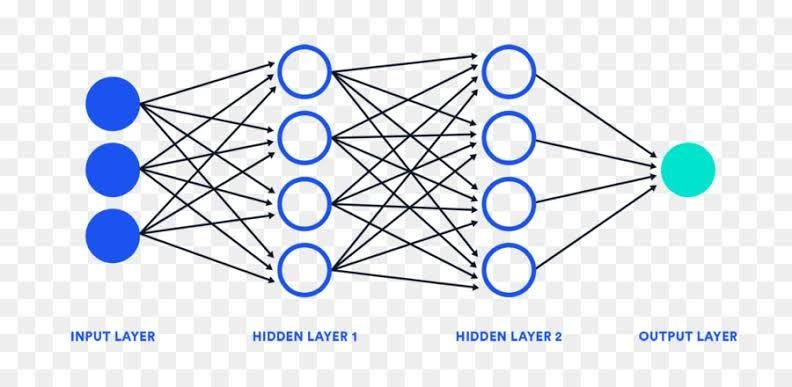

A neural network model is loosely inspired by the biological neurons inside our brains, but the two are not the same thing. We build and train an artificial network using two processes: forward propagation and backward propagation.

Forward propagation is the process of moving an input through a neural network to produce an output. At each layer, the input is multiplied by the weights, the biases are added, and the result is passed through an activation function. The output of one layer becomes the input of the next, and the last layer gives the network’s output.
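To make the forward pass concrete, here is a minimal sketch in plain Python. Everything specific in it is an assumption made up for illustration: the 2-2-1 layer sizes, the weight and bias values, the choice of a sigmoid activation, and the helper name `dense_forward`.

```python
import math

def sigmoid(z):
    """Logistic activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense_forward(inputs, weights, biases):
    """One fully connected layer: for each neuron, weighted sum + bias, then activation."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(z))
    return outputs

# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
# All numbers below are made up for the example.
x = [0.5, -1.0]
hidden_w = [[0.1, 0.4], [-0.3, 0.2]]  # one row of weights per hidden neuron
hidden_b = [0.0, 0.1]
output_w = [[0.7, -0.5]]              # one row of weights for the output neuron
output_b = [0.2]

# Forward propagation: the output of one layer is the input of the next.
hidden = dense_forward(x, hidden_w, hidden_b)
prediction = dense_forward(hidden, output_w, output_b)
print(prediction)
```

Nothing is learned here. With fixed weights and biases, the same input always gives the same output, which is why a separate training step is needed.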

Backward propagation, also known as backpropagation, is the process used to train neural networks. The network measures the difference between its predicted output and the actual output with a loss function, then works backward through the layers to calculate how much each weight and bias contributed to that error (the gradient). The weights and biases are then adjusted in the direction that reduces the error. This process is repeated many times, until the error stops improving or the network’s predictions are accurate enough.
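The training idea can be sketched with the smallest possible case: a single neuron with one weight and one bias, trained on one example. This is only an illustration under stated assumptions. The squared-error loss, the sigmoid activation, the learning rate of 0.5, the starting values, and the function name `train_step` are all choices made for this example.

```python
import math

def sigmoid(z):
    """Logistic activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def train_step(w, b, x, target, learning_rate):
    """One forward pass, one backward pass, and one parameter update."""
    # Forward pass: compute the prediction and how wrong it is.
    prediction = sigmoid(w * x + b)
    loss = (prediction - target) ** 2

    # Backward pass: apply the chain rule from the loss back to w and b.
    dloss_dpred = 2.0 * (prediction - target)    # derivative of squared error
    dpred_dz = prediction * (1.0 - prediction)   # derivative of sigmoid
    dloss_dz = dloss_dpred * dpred_dz
    grad_w = dloss_dz * x                        # z = w * x + b, so dz/dw = x
    grad_b = dloss_dz                            # dz/db = 1

    # Update: move each parameter a small step against its gradient.
    new_w = w - learning_rate * grad_w
    new_b = b - learning_rate * grad_b
    return new_w, new_b, loss

# Made-up starting parameters and a single made-up training example.
w, b = 0.5, 0.0
losses = []
for _ in range(100):
    w, b, loss = train_step(w, b, x=1.5, target=1.0, learning_rate=0.5)
    losses.append(loss)

print("first loss:", losses[0])
print("last loss:", losses[-1])
```

Each step contains a forward pass followed by a backward pass, and the loss shrinks as the steps repeat. Real networks apply the same chain rule layer by layer across many weights, usually through a library that computes the gradients automatically.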

Now the question arises: which is best, forward propagation or backward propagation? Neither one wins, because they do different jobs and training needs both. Forward propagation produces an output for a given input, while backward propagation adjusts the neural network’s weights and biases to improve its predictions. Without forward propagation there would be no prediction to evaluate, and without backward propagation there would be no way to learn from the network’s mistakes.